Gemini Omni leaked this weekend ahead of Google I/O. Strong editing capabilities. Weaker raw generation than Seedance. The pattern reveals where AI video is heading next.

Luxe Prompting

ISSUE 28 MAY 2026

Google’s next AI video model just leaked.

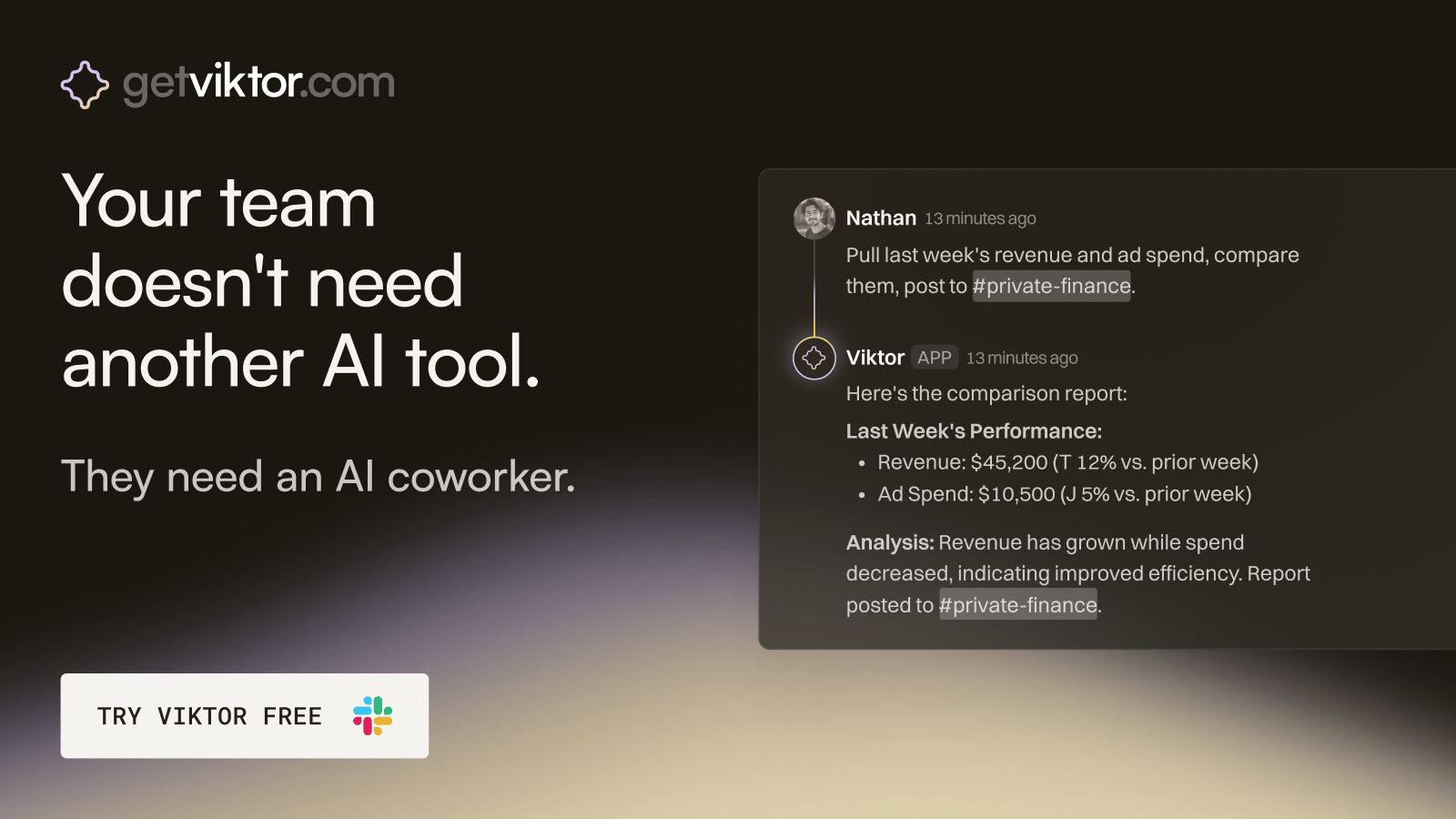

The ops hire that onboards in 30 seconds.

Viktor is an AI coworker that lives in Slack, right where your team already works.

Message Viktor like a teammate: "pull last quarter's revenue by channel," or "build a dashboard for our board meeting."

Viktor connects to your tools, does the work, and delivers the actual report, spreadsheet, or dashboard. Not a summary. The real thing.

There’s no new software to adopt and no one to train.

Most teams start with one task. Within a week, Viktor is handling half of their ops.

|

•••

|

|

Google accidentally exposed its next AI video model over the weekend. A revised Gemini interface briefly surfaced a model card describing Gemini Omni, a unified video system that creators can use to generate clips, remix videos, edit directly in chat, and apply templates. The screenshots came from Reddit users who caught what looks like a limited A/B test or an early rollout that escaped containment. Google I/O runs May 19 and 20, so the timing is hardly a coincidence.

The headline most outlets are running with is that Omni's raw video quality appears to lag behind ByteDance's Seedance 2, which currently leads the benchmark race. Viewers comparing early Omni outputs noticed weaker cinematic quality and less natural motion than what Seedance has been producing for months. That is a true observation. It is also the least interesting part of the story.

The interesting part is what Omni does that no one else does well. Object swapping inside existing clips. Scene rewriting through chat instructions. Watermark removal that does not break the footage around it. The editing capabilities are reportedly the strongest in the category. For creators, that distinction matters more than raw generation quality, and the pattern Google is running here is one worth understanding before the rest of the industry catches up.

The Leak

What the screenshots actually showed.

The leaked model card carried a short pitch: "Create with Gemini Omni. Meet our new video model. Remix your videos. Edit directly in chat. Try templates. And more." Brief enough to be a placeholder, specific enough to confirm that Google is positioning Omni as a creative tool rather than a research demo.

Users also spotted a new usage limits tab inside settings, suggesting a metered system similar to what Google has been testing across other Gemini surfaces. Several reports indicated that video generation burned through credits quickly, which fits the pattern of a tiered rollout where entry-level users get a small monthly allocation and heavier users pay for more capacity. Two tier variants likely ship at launch, probably Flash and Pro, with the outputs circulating now coming from the Flash tier.

Editing requests through chat appeared to work unusually well for a first public glimpse. "Remove the watermark from this clip." "Replace the person walking with a different person." "Rewrite the scene to take place at night." These are the prompt patterns the leaked interface seemed to support. None of them are common in current AI video tools, where the workflow is still mostly generate, accept, or regenerate.

The Pattern

Google is running the Nano Banana playbook again.

A year ago, Google quietly shipped a native image model inside Gemini called Nano Banana. The initial reviews were lukewarm. Raw generation quality was middling. Compositions were less interesting than what FLUX or Midjourney were producing. Most coverage treated Nano Banana as a placeholder and moved on.

Then Nano Banana topped the editing leaderboards. The model could not always generate the most beautiful image, but it could take any image and modify it through natural language more effectively than any other tool on the market. Within months, Google quietly upgraded it into a frontier image system. By early 2026, Nano Banana 2 was ranked second on the Artificial Analysis arena, sitting just behind GPT Image 2 and ahead of every other image model. The launch positioning had been a feint. The real strategy was editing first, generation second.

Gemini Omni looks like the same playbook applied to video. Launch with strong editing capabilities. Accept that raw generation will trail behind the benchmark leaders at first. Use the integration into the broader Gemini ecosystem to gather usage data and improve the model over time. By the second or third version, the generation quality catches up and the editing lead compounds. Whether this strategy works again depends on factors Google does not fully control, but the pattern is clear enough that creators should be watching for it.

What It Means for Creators

The shift from generation-first to editing-first.

For most of the last two years, the question creators asked about AI video was the same question they asked about AI images. Which model generates the most beautiful output? The answer kept changing because the leaders kept changing. Runway. Pika. Sora. Kling. Veo. Seedance. Every few months a new model would top the rankings and the conversation would reset.

Gemini Omni hints at a different question becoming more important. Which model lets you edit what you generate? The shift matters because most professional creator work is not single-shot generation. It is iteration. A clip needs the background changed. A character's outfit is wrong. The pacing of the cut is off. The watermark on the source needs to come out. These are the problems that determine whether AI video is usable for actual client work, and they are problems that current video models handle badly.

If Google ships Omni with strong editing capabilities and even respectable generation quality, the workflow advantage tilts toward Gemini for the kind of multi-step video work that defines professional creator output. Other companies will race to match. Runway, Kling, and Pika already have early editing features but nothing as integrated as what Omni appears to deliver. The category may be heading toward editing-first as the default, which would be a fundamental shift in how AI video tools compete.

What to Watch Next Week

Three signals from Google I/O.

Google I/O kicks off Tuesday, May 19 at 10 a.m. Pacific, with the Developer Keynote later that afternoon. Sessions continue on May 20. The full event streams at io.google for anyone who wants to watch live. Three things to watch that matter for creators.

One. The official Omni launch. Whether Google confirms the leaked features, whether the editing capabilities are as strong as the screenshots suggested, and whether the Flash and Pro tiers ship at the same price points as the current Gemini stack.

Two. Gemini 4 or a major Gemini upgrade. A new flagship model is widely expected. The strongest signal will be whether the new model includes native video capabilities or keeps video as a separate Omni surface.

Three. Veo 4. Engadget and others have floated this as a possibility. If Google ships both a new Omni video model and a Veo 4 update, the two-track approach signals that Google sees consumer video and professional video as different markets. That distinction would shape every video tool strategy for the rest of the year.


For now, the takeaway is to stop benchmarking AI video tools purely on raw generation quality. The conversation is moving to editing capability and integration with the tools creators already use. Google is making that shift visible early. The companies that follow will be the ones that matter most for professional creator work over the next twelve months.

If you are picking an AI video tool for a long-term workflow this week, the strongest move may be to wait. I/O is six days away. The category is about to reshape. Committing to a specific tool right now means locking in ahead of an announcement that may change the math.


•••

I am writing a full creator recap of Google I/O for the morning after the keynote. Every announcement that matters for image, video, voice, and workflow, with the practical takeaway for each one.

Want it when it ships? Reply with "send me the I/O recap" and I will get it to you.


A QUESTION FOR YOU

Are you generating or editing more in your AI video work?

Reply and tell me. The replies determine which capabilities I dig into next, and whether editing-first workflows or generation-first workflows get the deeper coverage.

If this issue resonated, forward it to a creator who works in AI video.


Until next time,

Luxe Prompting


AI Image Generation for Creators
